Best GPUs for AI & LLM Workloads (Full Guide)

In the first quarter of 2026, NVIDIA’s lineup dominates the list of the best GPUs for AI workflows.

For data center training, the NVIDIA H200 (141GB) and B200 (192GB) deliver top performance, while the RTX 5090 (32GB GDDR7) and RTX 6000 Ada (48GB) lead for workstations. For local development, the RTX 4090 (24GB) is excellent, and the RTX 4060 Ti (16GB) serves as a strong, budget-friendly entry point.

🤖 AI Overview:

The best GPUs for AI workflows in 2026 are the RTX 3060, 4060 Ti, and 5060 for small models; the RTX 4080, 5080, and 5090 for mid-level workloads; and the A100, H100, and B100 for enterprise and data center workloads.

Why GPUs Dominate AI Compute in 2026

GPUs have dominated AI computing for more than a decade because of their parallel processing capabilities.

Think of it this way: a CPU core works largely sequentially, finishing task one before starting task two, whereas a GPU runs thousands of tasks simultaneously.

This is especially useful for neural networks, where simple operations such as matrix multiplication must be repeated many times across a large dataset. Running them in parallel significantly reduces the time required to train AI models.

Understanding GPU Compute Architecture

The 5 main parameters of the GPU compute architecture that you should consider when buying a GPU server are:

1. Parallel Processing Units (CUDA Cores / Stream Processors)

While Tensor Cores excel at AI tasks, CUDA Cores (NVIDIA GPUs) and Stream Processors (AMD GPUs) are the primary, general-purpose parallel processing units. The number of cores indicates how much parallel work the GPU can handle at once.

2. Memory (VRAM)

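Optimized for rapid data transfer, VRAM is the warehouse of the GPU. In AI workloads it holds the model weights, activations, and data batches the GPU works on, just as it holds 3D models, image data, and textures in graphics. VRAM capacity therefore limits how large a model you can run, and its speed affects how fast that model runs.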

3. Cache Hierarchy

GPUs use a cache hierarchy (L1, L2, and shared memory) to keep data close to the compute units. L1 is the fastest cache, L2 is larger but slower, and shared memory lets threads working together exchange data.

4. Memory Bus and Bandwidth

The memory bus is the path between the GPU and VRAM. Its width (in bits), together with the memory's data rate, determines the memory bandwidth. The higher the bandwidth, the faster data moves between VRAM and the GPU cores.
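As a rough illustration of how bus width and data rate combine, the sketch below computes theoretical peak bandwidth. The function name is hypothetical, and the example figures (a 384-bit bus at 21 Gbps per pin, NVIDIA's published numbers for the RTX 4090) are assumptions brought in for the example rather than values from this guide.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s.

    bus_width_bits: width of the memory bus in bits
    data_rate_gbps: effective data rate per pin in Gbit/s
    """
    bytes_per_transfer = bus_width_bits / 8  # bits -> bytes moved per transfer
    return bytes_per_transfer * data_rate_gbps


# Assumed example: 384-bit bus, 21 Gbps GDDR6X (RTX 4090 class)
print(memory_bandwidth_gbs(384, 21.0))  # 1008.0
```

Real-world throughput is lower than this peak, so treat the result as an upper bound when comparing cards.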

5. Clock Speed

Clock speed (in MHz or GHz) represents the speed of the GPU cores performing various tasks. You can speed up your workload by having a higher clock speed or more cores.

Higher clock speed and fewer cores are ideal for gaming setups, while lower clock speed and many cores are the best option for AI workloads.
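The trade-off between clock speed and core count can be sketched with the usual peak-throughput estimate, which assumes each core completes one fused multiply-add (two floating-point operations) per cycle. The function name is hypothetical, and the RTX 4090 figures (16,384 CUDA cores, about 2.52 GHz boost) are NVIDIA's published specs, not values from this guide.

```python
def peak_fp32_tflops(cores: int, clock_ghz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes 2 FLOPs per core per cycle (one fused multiply-add).
    """
    gflops = 2 * cores * clock_ghz
    return gflops / 1000


# Assumed example: RTX 4090 class GPU
print(round(peak_fp32_tflops(16384, 2.52), 1))  # 82.6

# Doubling the core count helps as much as doubling the clock speed
assert peak_fp32_tflops(2000, 1.0) == peak_fp32_tflops(1000, 2.0)
```

In practice clock speed can only rise so far, which is why AI-oriented GPUs scale mainly by adding cores.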

What Are Tensor Cores and Why Do They Matter?

Tensor Cores are specialized processing units in NVIDIA GPUs that accelerate the matrix math behind deep learning, resulting in faster AI training and inference. They are the units of a modern GPU that most directly affect the speed of AI models.

Tensor Cores matter because deep learning, diffusion models, and other neural network workloads largely reduce to matrix multiplication, and Tensor Cores are built to do exactly that in parallel.

In such workloads, the same calculation is repeated over and over, and Tensor Cores handle this repetition efficiently by processing small blocks of a matrix in a single operation.
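The operation a Tensor Core accelerates is a matrix multiply-accumulate, D = A × B + C, applied to small tiles. The plain-Python sketch below only shows the arithmetic. It is not how the hardware is programmed, and the function name `mma` is hypothetical.

```python
def mma(a, b, c):
    """Matrix multiply-accumulate: returns D = A x B + C.

    Every element of D depends only on one row of A and one column of B,
    so all elements can be computed independently, which is what makes
    the operation a good fit for parallel hardware.
    """
    rows, inner, cols = len(a), len(b), len(b[0])
    return [
        [c[i][j] + sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 0], [0, 1]]
print(mma(a, b, c))  # [[20, 22], [43, 51]]
```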

Compute Precision Explained (BF16, TF32, FP16, FP32, FP64, FP64 Tensor)

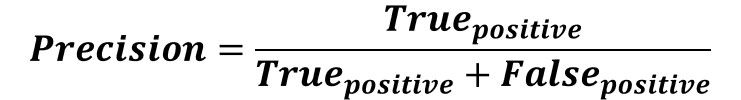

Compute precision is the numeric format a GPU uses to store and calculate values such as weights, activations, and gradients. It should not be confused with the classification metric called precision (true positives divided by the sum of true positives and false positives), which measures model quality rather than number format.

Each format splits its bits among a sign, an exponent, and a mantissa. More exponent bits give a wider range of representable values, and more mantissa bits give finer precision.

Fewer bits mean less memory and faster math at the cost of numerical accuracy, so choosing a format is a trade-off between speed, memory, and accuracy.

- FP32 (Float)

FP32, also called float, is the single-precision floating-point format and the traditional default for deep learning computations. It uses 32 bits to represent a number: 1 bit for the sign, 8 bits for the exponent, and 23 bits for the mantissa.

Practical example: 1 10000010 11101100000000000000000

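In this bit pattern, “1” is the sign, “10000010” is the exponent, and “11101100000000000000000” is the mantissa. The sign bit marks the number as negative, the exponent is 130 - 127 = 3, and the mantissa encodes 1.921875, so the number is -1.921875 × 2^3 = -15.375.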
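A bit pattern like the one in the example can be checked with Python's standard `struct` module. This is a minimal sketch, and the helper name `decode_fp32` is hypothetical.

```python
import struct


def decode_fp32(sign: str, exponent: str, mantissa: str) -> float:
    """Decode an IEEE 754 single-precision value from its three bit fields."""
    assert len(sign) == 1 and len(exponent) == 8 and len(mantissa) == 23
    bits = int(sign + exponent + mantissa, 2)       # 32-bit integer
    raw = bits.to_bytes(4, byteorder="big")         # 4 raw bytes
    return struct.unpack(">f", raw)[0]              # reinterpret as float32


print(decode_fp32("1", "10000010", "11101100000000000000000"))  # -15.375
```

Decoding by hand gives the same result: the sign bit is 1 (negative), the exponent is 130 - 127 = 3, and the mantissa is 1.921875.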

- FP16 (Half)

FP16, or half-precision floating point, uses 16 bits (half as many as FP32) to represent a number. It assigns 1 bit to the sign, 5 bits to the exponent, and 10 bits to the mantissa.

FP16 is faster and uses half the memory of FP32 because it has fewer bits. It is often used in mixed-precision training to improve performance.

- BF16

BF16, or Brain Floating Point 16, combines FP32’s stability with FP16’s efficiency. It allocates 1 bit for the sign, 8 bits for the exponent (the same as FP32), and 7 bits for the mantissa (fewer than FP32). This allocation gives BF16 the same dynamic range as FP32 but with lower precision.
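Because BF16 keeps FP32's sign and exponent bits, an FP32 value can be turned into BF16 by keeping only its upper 16 bits. The sketch below uses simple truncation to make that visible. Real hardware and libraries typically round to nearest instead, and the helper name is hypothetical.

```python
import struct


def to_bf16_truncate(x: float) -> float:
    """Approximate BF16 conversion by dropping the low 16 bits of an FP32 value.

    Assumption: plain truncation, not the round-to-nearest used in practice.
    """
    fp32_bits = struct.unpack(">I", struct.pack(">f", x))[0]
    bf16_bits = fp32_bits & 0xFFFF0000  # keep sign, 8 exponent bits, 7 mantissa bits
    return struct.unpack(">f", struct.pack(">I", bf16_bits))[0]


print(to_bf16_truncate(3.14159))  # 3.140625 (precision is lost)
print(to_bf16_truncate(1e38) > 9e37)  # True (range is preserved)
```

The first result shows the reduced precision of a 7-bit mantissa, and the second shows that very large values survive, since the exponent field is untouched.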

- FP64 (Double)

FP64, the double-precision floating-point format, allocates 1 bit for the sign, 11 bits for the exponent, and 52 bits for the mantissa. FP64 is used where extra accuracy matters, such as financial, scientific, and engineering workloads.

- FP64 Tensor (Double-Precision Tensor Core)

The term “FP64 Tensor” refers to FP64 matrix calculations that run on the GPU’s Tensor Cores rather than on its general-purpose cores. This accelerates the double-precision math used in simulations and numeric models.

Some of NVIDIA’s data-center-level GPUs, such as H200, H100, A100, A30, and B200, come equipped with FP64 Tensor Cores and are well suited to simulation workloads and to training and running scientific AI models.

- TF32

TF32 (TensorFloat-32), designed specifically for AI and deep learning, is a bridge between high-precision FP32 and the faster but less precise BF16 and FP16. It allocates 1 bit for the sign, 8 bits for the exponent (the same range as FP32), and 10 bits for the mantissa (the same precision as FP16).
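The bit allocations listed for each format translate directly into range and precision. The sketch below derives both from the exponent and mantissa widths, assuming IEEE-style encoding with the top exponent code reserved for infinity and NaN. The names `FORMATS`, `max_finite`, and `epsilon` are hypothetical.

```python
# (exponent bits, mantissa bits) as listed for each format
FORMATS = {
    "FP64": (11, 52),
    "FP32": (8, 23),
    "TF32": (8, 10),
    "FP16": (5, 10),
    "BF16": (8, 7),
}


def max_finite(exp_bits: int, mant_bits: int) -> float:
    """Largest finite value: (2 - 2^-m) * 2^bias, with bias = 2^(e-1) - 1."""
    bias = 2 ** (exp_bits - 1) - 1
    return (2 - 2.0 ** -mant_bits) * 2.0 ** bias


def epsilon(mant_bits: int) -> float:
    """Gap between 1.0 and the next representable value."""
    return 2.0 ** -mant_bits


for name, (e, m) in FORMATS.items():
    print(f"{name}: max ~ {max_finite(e, m):.3e}, epsilon = {epsilon(m):.3e}")

print(max_finite(5, 10))  # 65504.0, the FP16 limit
```

The output makes the trade-off concrete: BF16 and TF32 reach the same magnitude as FP32, while FP16 tops out at 65504 but resolves finer steps than BF16.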

How Precision Modes Impact Training & Inference

| Precision Format | Typical Usage | Speed | Memory | Accuracy |
|---|---|---|---|---|
| FP32 | Training baseline | Moderate (baseline) | Medium | High |
| FP16/BF16 | Training / inference | Very fast | Low | High (mixed precision) / lower (pure 16-bit) |
| FP64 | Scientific / simulation | Very slow | High | Very high |
| TF32 | Faster AI training / inference | Fast | Medium | Medium |

Best GPU Specs for Machine Learning

Consider the type of your AI workload when choosing the best GPU for ML (machine learning). Throughput matters most for training workloads, while latency is critical for inference. The number of model parameters also affects which GPU specs you need.

The best GPU specs for machine learning in 2026 are:

| Workload | VRAM | Recommended GPU |
|---|---|---|
| Small LLM inference (<7B) | 8–12 GB | RTX 4060 / 3060 |
| Medium LLM training (7B) | 24 GB | RTX 4090 |
| Large LLM inference (14B) | 40–48 GB | L40S / A40 |
| Distributed training (70B+) | 80+ GB | A100 / H100 / B200 |
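A quick way to sanity-check VRAM figures like these is to estimate the memory taken by the model weights alone: parameter count times bytes per parameter. This is a rough sketch with hypothetical names. The INT8 and INT4 entries are assumptions added for illustration, and the estimate ignores activations, KV cache, gradients, and optimizer state, which add substantially to the total.

```python
# Bytes needed to store one parameter in each format
BYTES_PER_PARAM = {
    "FP32": 4,
    "FP16": 2,
    "BF16": 2,
    "INT8": 1,    # assumption: 8-bit quantized weights
    "INT4": 0.5,  # assumption: 4-bit quantized weights
}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Memory for the weights only, in GB (1 GB = 10^9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


print(weight_memory_gb(7, "FP16"))   # 14
print(weight_memory_gb(70, "FP16"))  # 140
print(weight_memory_gb(70, "INT4"))  # 35.0
```

A 7B model in FP16 needs about 14 GB for weights before any working memory, which is why 24 GB cards are the practical floor for that size.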

Best GPU Specs for Fine-Tuning (LoRA / QLoRA)

LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA) are fine-tuning techniques for LLMs. LoRA keeps the base model in 16-bit precision (FP16/BF16), while QLoRA stores the base model in 4-bit precision and trains 16-bit adapters. As a result, LoRA is faster, has medium-to-high VRAM usage, and is ideal for 7B to 20B models, whereas QLoRA is a bit slower, needs far less VRAM (roughly 4x less than LoRA), and is best for models of 30B and up.
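The reason LoRA is so much cheaper than full fine-tuning can be shown with a parameter count. For a weight matrix of shape d_out × d_in, LoRA trains two small matrices of rank r instead, adding r × (d_in + d_out) parameters. The layer size and rank below are hypothetical values chosen for illustration.

```python
def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one weight matrix.

    LoRA learns B (d_out x rank) and A (rank x d_in) while the
    original d_out x d_in matrix stays frozen.
    """
    return rank * (d_in + d_out)


# Hypothetical layer: 4096 x 4096 projection, rank 8
full = 4096 * 4096
lora = lora_params(4096, 4096, rank=8)

print(full)         # 16777216
print(lora)         # 65536
print(lora / full)  # 0.00390625
```

Here the adapter trains under 0.4% of the layer's parameters, so gradient and optimizer memory shrink accordingly. The frozen base weights still have to fit in VRAM, which is the part QLoRA reduces by storing them in 4 bits.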

| Workload | VRAM | Recommended GPU |
|---|---|---|
| Entry level (<7B) | 10-12 GB | RTX 3080 / 4070 |
| Mid level (7B-13B) | 24 GB | RTX 3090 / 4090 |
| Mid to Enterprise (13B-30B) | 40-48 GB | A100 (40GB) / L40S (48GB) |
| Enterprise level (>30B) | 80 GB | A100 (80GB) / H100 |

Best GPU Specs for Full Model Training

Full Model Training is a resource-intensive process in which an AI model is trained on an enormous dataset, usually requiring enterprise hardware.

| Workload | VRAM | Recommended GPU |
|---|---|---|
| Small | 24 GB | RTX 4090 (GDDR6X) |
| Mid level | 48 GB | RTX 6000 Ada |
| Large | 80 GB | H100 (80GB HBM3) / A100 80GB |
| Very Large | >160 GB | Multi-node H100 / H200 |

Best GPU Specs for NLP & LLM Inference (Llama 3, Mistral, Qwen)

When choosing the best GPU for NLP and LLM inference, VRAM is the critical factor, with memory bandwidth coming next.

The NVIDIA RTX 3060 and 4060 Ti are the best picks for small models, the RTX 4090 (24GB GDDR6X) is the common choice for models up to 13B, and the RTX 4060 Ti (16GB) is the budget-friendly GPU for Llama 3 and Mistral.
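Memory bandwidth matters for inference because generating each token requires reading roughly all of the model's weights from VRAM. A common rule of thumb, used here as an assumption rather than a benchmark, is that single-stream generation speed cannot exceed bandwidth divided by model size. The function name and the example figures (about 1008 GB/s of bandwidth, a 14 GB model) are hypothetical inputs.

```python
def max_tokens_per_second(bandwidth_gbs: float, model_size_gb: float) -> float:
    """Rough upper bound on single-stream decoding speed.

    Assumes every generated token reads all weights once from VRAM
    and ignores compute time, KV cache reads, and batching.
    """
    return bandwidth_gbs / model_size_gb


# Assumed example: ~1008 GB/s card running a 14 GB (7B, FP16) model
print(max_tokens_per_second(1008, 14))  # 72.0
```

Measured speeds fall below this ceiling, but the estimate explains why two cards with the same VRAM can differ noticeably in tokens per second.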

| Workload | VRAM | Recommended GPU |
|---|---|---|
| Entry level (<7B) | 8–16 GB | RTX 3060 / RTX 4060 Ti |
| Mid level (7B-13B) | 24-32 GB | RTX 4090 / RTX 5090 |
| Enterprise level | 80+ GB | A100 80 GB / H200 141 GB |

Best GPUs for SDXL / Diffusion Models

CUDA cores and Tensor Cores, along with sufficient VRAM, are what you should look for in the best GPU for SDXL and diffusion models.

| Workload | VRAM | Recommended GPU |
|---|---|---|
| Basic SDXL generation | 12 GB | RTX 3060 12 GB |
| Mid-level SDXL | 16 GB | RTX 4070 Ti / 4080 |
| Heavy SDXL (local inference) | 24 GB | RTX 4090 |
| Enterprise level | 80 GB | A100 / H100 |

Best GPUs for AI Video Generation (Kling, Sora-style pipelines)

VRAM, bandwidth, and Tensor cores are primary factors in choosing the best GPU for AI video creation.

12 to 24GB of VRAM can handle short, low-resolution clips (IG Reels, YT Shorts), 24 to 48GB is sufficient for mid-level work, and enterprise-level pipelines require up to 80GB.

| Workload | VRAM | Recommended GPU |
|---|---|---|
| Entry level | 16-24 GB | RTX 5080 / 3090 / 4090 |
| Mid level | 32 GB | RTX 5090 |
| Mid to Enterprise level | 48 GB | RTX 6000 Ada |
| Enterprise level | 80 GB | NVIDIA A100 |

VRAM Requirements for 2026 AI Models

The exact setup for each AI model, and its VRAM requirement in particular, depends on your budget, infrastructure, workload, pipelines, and other factors.

But for a quick scan, below are the general VRAM requirements for 2026 AI models:

| Workload | VRAM Requirements | Best for | Recommended GPU |
|---|---|---|---|
| Starter | 8-16 GB | LLM / NLP / Fine-Tuning | RTX 3060 / 3080 / 4060 |
| Professional | 16-32 GB | SDXL / Stable Diffusion / Fine-Tuning | RTX 4070 Ti Super / 4090 / 5090 |
| Enterprise | 80 GB | Video Generation / Full Model Training | A100 / H100 |

Recommended GPU + CPU Pairings for Workflows

Keep in mind that the right GPU + CPU pairing also depends on your AI workload, budget, and primary goal.

The pairing setups provided below are recommended and budget-friendly:

Entry-Level (Hobbyists, Consumers)

- NVIDIA RTX 3060 + AMD Ryzen 5 7600X

- NVIDIA RTX 4060 Ti + Intel Core i5-14600K

Mid-Level (Fine-Tuning, Generative AI)

- NVIDIA RTX 4080 Super + AMD Ryzen 7 9800X3D

- NVIDIA RTX 4090 + AMD Ryzen 9 9950X, or Intel Core Ultra 9 285K

- NVIDIA RTX 5090 + AMD Ryzen 7 9800X3D

Enterprise (Research, Large Model Training)

- GPU: NVIDIA A100/ H100/ H200

- CPU: Intel Core i9-14900K, AMD Ryzen 9 9950X, Threadripper Pro (for multi-GPU setup)

Final GPU Recommendations by Budget

| Price Range | Recommended GPU |
|---|---|
| <$250 | Nvidia RTX 5050, Intel Arc B580 12GB |
| $250-500 | AMD Radeon RX 9060 XT (16GB), Nvidia RTX 5060 Ti / 5060 |
| >$600 | AMD Radeon RX 9070 / 9070 XT, Nvidia RTX 5070 / 5070 Ti / 5080 / 5090 |

FAQ

2. How much VRAM do I need for SDXL or Llama 3?

- For Llama 3, 8–12GB is sufficient for entry level (under 7B parameters), 24-32GB for mid level (7B-13B parameters), and 80GB or more for enterprise level.

- For SDXL, 12GB is the starting point, 16GB suits mid-level SDXL, 24GB suits heavy SDXL (local inference), and 80GB or more is for enterprise level.

3. Is A40 better than A10 for fine-tuning?

Yes, A40 is better than A10 for fine-tuning. For example, A40 comes with 48GB VRAM and 10,752 CUDA cores, whereas A10 has 24GB VRAM and 9,216 CUDA cores.

4. Can I train models on an RTX 4090?

Yes, the NVIDIA RTX 4090 is the best GPU for getting started with AI training.

5. What is the difference between FP16, BF16, and TF32?

TF32 uses 19 bits to represent a floating-point number, while FP16 and BF16 use only 16. FP16 and BF16 values also occupy half the memory of a 32-bit value, which is why they are faster and need less memory than TF32.

For better results, FP16 or BF16 is combined with FP32 in mixed precision, pairing the speed of 16-bit math with the accuracy of 32-bit math.

6. Do Tensor Cores matter for inference or only training?

Tensor Cores are crucial for both training and inference.

7. When do I need an A100 or H100?

You need an A100 or H100 when your AI model outgrows mid-level hardware and requires enterprise infrastructure.

8. Is multi-GPU required for video models like Kling?

Not necessarily. An NVIDIA RTX 5090 is enough for most mid-level AI video models, including Kling.

9. Which GPUs have FP64 Tensor Core?

Some of NVIDIA's data-center-level GPUs, such as H200, H100, A100, A30, and B200, come equipped with FP64 Tensor Core and are often labeled as "Double-Precision Tensor Core GPU".